G-Sync vs FreeSync: The Future of Monitors

For those of you interested in monitors, PC gaming, or watching movies/shows on your PC, there are a couple of recent technologies you should be familiar with: Nvidia’s G-Sync and AMD’s FreeSync.

Basics of a display

Most modern monitors run at 30 Hz, 60 Hz, 120 Hz, or 144 Hz depending on the display. The most common frequency is 60 Hz (meaning the monitor refreshes the image on the display 60 times per second). This is because old televisions were designed to run at 60 Hz, which was the frequency of the alternating current used for power in the US. Matching the refresh rate to the power frequency helped prevent rolling bars on the screen (known as intermodulation).

What the eye can perceive

It is a common myth that 60 Hz was chosen because that’s the limit of what the human eye can detect. In truth, when monitors for PCs were created, they were made to run at 60 Hz, just like televisions, mostly because there was no reason to change it. The human brain and eye can interpret up to approximately 1000 images per second, and most people can reliably identify a framerate up to approximately 150 FPS (frames per second). Movies tend to run at 24 FPS (the first movie to be filmed at 48 FPS was The Hobbit: An Unexpected Journey, which got mixed reactions from audiences, some of whom felt the high framerate made the movie appear too lifelike).

The important thing to note is that while humans can detect framerates much higher than what monitors can display, there are diminishing returns on increasing the framerate. For video to look smooth, it really only needs to run at a consistent 24+ FPS. However, the higher the framerate, the clearer the movements onscreen. This is why gamers prefer games to run at 60 FPS or higher, which provides a very noticeable difference when compared to games running at 30 FPS (which was a standard for the previous generation of console games).

How graphics cards and monitors interact

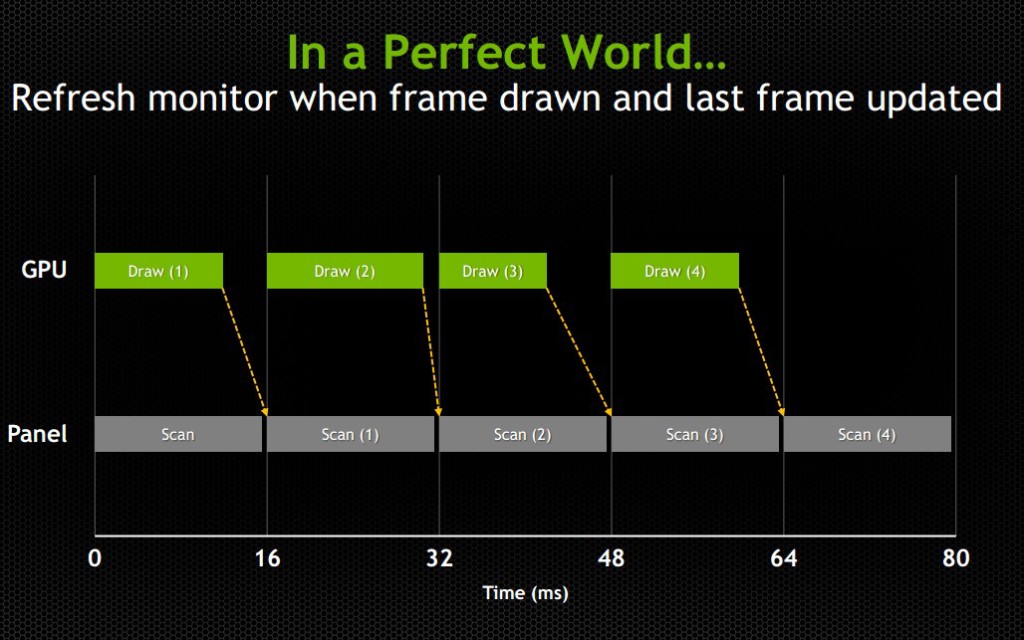

An issue with most games is that while a display will refresh 60 times per second (60 Hz), or 120 or 144 or whatever the display can handle, the images being sent from the graphics card (GPU) to the display will not necessarily arrive at the same rate or frequency. Even if the GPU sends 60 new frames in 1 second, and the display refreshes 60 times in that second, it’s possible that the time between consecutive frames rendered by the GPU will vary wildly.

Explaining frame stutter

This is important, because while the display will refresh the image every 1/60th of a second, the time delay between one frame and another being rendered by the GPU can and will vary.

Consider the following example: The running framerate of a game is 60 FPS, considered a good number. However, the time delay before a third of the frames is 1/30th of a second and the delay before the other two thirds is 1/120th of a second (which still averages out to 60 frames in one second). In the case of it being 1/30th of a second, the monitor will have refreshed twice in that amount of time, having shown the previous image twice prior to receiving the new one. In the case of it being 1/120th of a second, a newer image will have been rendered by the GPU before the display refreshes, so the earlier image will never even make it to the screen. This introduces an issue known as micro-stuttering and is clearly visible to the eye.
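To make the effect concrete, here is a rough simulation sketch. It assumes a repeating pattern of one 1/30 s gap followed by two 1/120 s gaps (which adds up to exactly 60 frames in one second) and a display that simply shows the most recently completed frame at each refresh. The function name `simulate` and the pattern itself are illustrative choices, not a model of any real driver.

```python
from fractions import Fraction
from itertools import accumulate, cycle, islice

REFRESH_HZ = 60  # fixed-refresh display


def simulate(gaps, refresh_hz=REFRESH_HZ, n_refreshes=60):
    """Return, for each refresh, the index of the newest finished frame (or None)."""
    done = list(accumulate(gaps))  # completion time of each frame, in seconds
    shown, latest, next_frame = [], None, 0
    for k in range(1, n_refreshes + 1):
        t = Fraction(k, refresh_hz)  # time of the k-th refresh
        # Advance past every frame that finished by this refresh.
        while next_frame < len(done) and done[next_frame] <= t:
            latest = next_frame
            next_frame += 1
        shown.append(latest)
    return shown


# Assumed pattern: one slow frame (1/30 s), then two fast ones (1/120 s each).
pattern = [Fraction(1, 30), Fraction(1, 120), Fraction(1, 120)]
gaps = list(islice(cycle(pattern), 60))  # 60 frames in exactly 1 second

shown = simulate(gaps)
displayed = {f for f in shown if f is not None}
dropped = len(gaps) - len(displayed)  # frames that never reach the screen
repeated = sum(1 for a, b in zip(shown, shown[1:]) if a is not None and a == b)

print(f"frames rendered: {len(gaps)}, displayed: {len(displayed)}, "
      f"dropped: {dropped}, repeated refreshes: {repeated}")
```

Under these assumptions, a third of the rendered frames never reach the screen and a similar number of refreshes just repeat an old image, even though the counter still reads 60 FPS.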

This means that even though the game is being rendered at 60 frames per second, the frame rendering time varies and ends up not looking smooth. Micro-stuttering can also be caused by systems that utilize multiple GPUs. When frames rendered by each GPU don’t match up in regards to timing, it causes delays as described above, which produces a micro-stuttering effect as well.

Explaining frame lag

An even more common issue is that framerates rendered by a GPU can drop below 30 FPS when something is computationally complex to render, making whatever is being rendered look like a slideshow. Since not every frame is equally simple or complex to render, framerates will vary based on the frame. This means that even if a game is getting an average framerate of 30 FPS, it could be getting 40 FPS for half the time you’re playing and 20 FPS for the other half. This is similar to the frame time variance discussed in the paragraph above, but rather than appear as micro-stutter, it will make half of your playing time miserable, since people enjoy video games as opposed to slideshow games.
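The arithmetic behind that average can be sketched as follows. The 30-second segment lengths are made up for illustration; only the 40 FPS and 20 FPS figures come from the example above.

```python
def average_fps(segments):
    """Time-weighted average framerate for a list of (seconds, fps) segments."""
    total_frames = sum(seconds * fps for seconds, fps in segments)
    total_time = sum(seconds for seconds, _ in segments)
    return total_frames / total_time


# Hypothetical minute of play: half at 40 FPS, half at 20 FPS.
avg = average_fps([(30, 40), (30, 20)])

# Time each frame stays on screen, in milliseconds, at each framerate.
frame_ms = {fps: 1000 / fps for fps in (40, 20)}

print(f"average: {avg} FPS")
print(f"frame time at 40 FPS: {frame_ms[40]} ms, at 20 FPS: {frame_ms[20]} ms")
```

The average looks acceptable, but during the slow half each frame sits on screen twice as long as during the fast half, which is what you actually notice.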

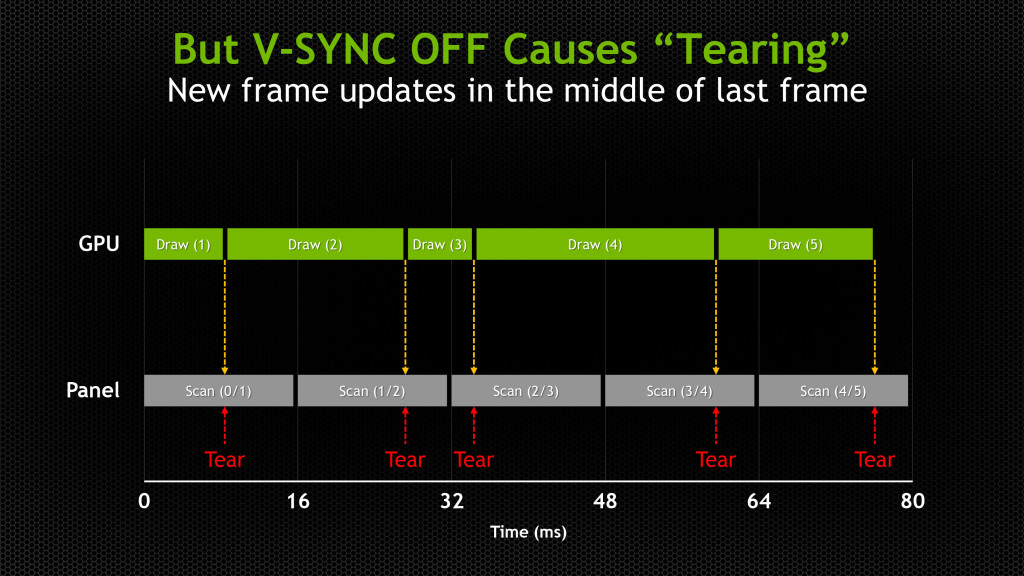

Explaining tearing

Probably the most pervasive issue though is screen tearing. Screen tearing occurs when the GPU is partway through rendering another frame, but the display goes ahead and refreshes anyways. This makes it so that part of the screen is showing the previous frame, and part of it is showing the next frame, usually split horizontally across the screen.

These issues have been recognized by the PC gaming industry for a while, so unsurprisingly some solutions have been attempted. The most well-known and possibly oldest solution is called V-Sync (Vertical Sync), which was designed mostly to deal with screen tearing.

V-Sync

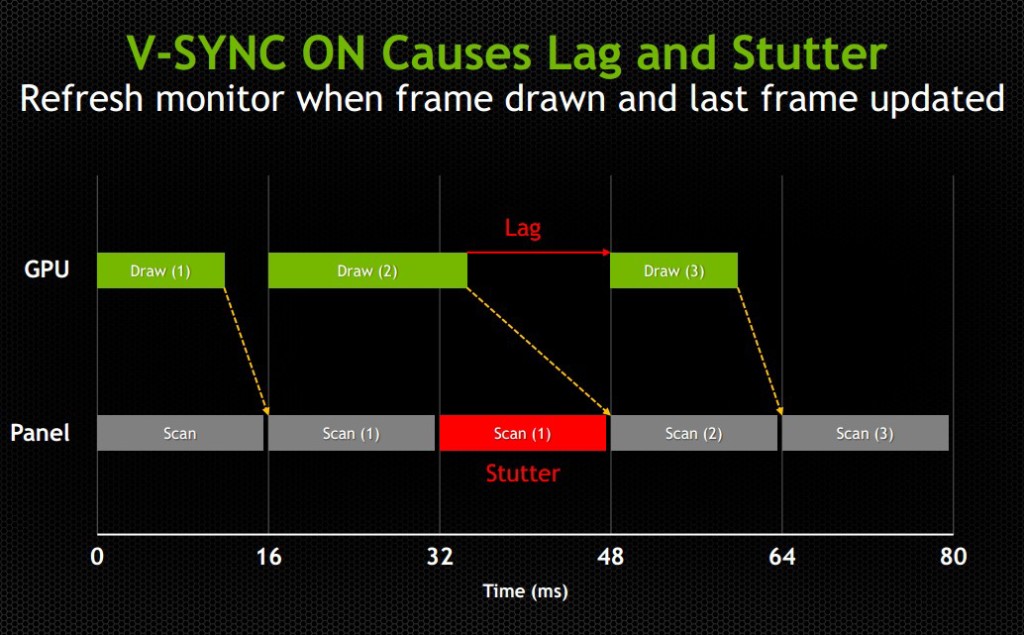

The premise of V-Sync is simple and intuitive: since screen tearing is caused by a GPU rendering frames out of time with the display, it could be solved by syncing the rendering/refresh time of the two. On a display that runs at 60 Hz, enabling V-Sync means the GPU will output no more than 60 FPS, to match the display.

However, the problem here should be obvious, as it was discussed earlier in this article: just because you tell the GPU to render a new frame at a certain time, it doesn’t mean it will have it fresh out of the oven for you in time. This means that the GPU is struggling to match pace with the display, but in some cases it will easily match (such as in cases where it would normally render over 60 FPS), whereas in others it can’t keep up (such as in cases where it would normally render under 60 FPS).

While V-Sync fixes the issue of screen tearing, it adds a new problem: any framerate under 60 FPS means that you’ll be dealing with stuttering and lag onscreen, since the GPU will be choking on trying to match render time with the display’s refresh time. Without V-Sync on, 40-50 FPS is a perfectly reasonable and playable framerate, even if you experience tearing. With V-Sync on, 40-50 FPS is a laggy unplayable mess.
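One way to see why 40-50 FPS suffers so badly is the sketch below. It assumes plain double-buffered V-Sync (no triple buffering) and a constant render time per frame, so a frame that misses a refresh has to wait for the next one. The function name `vsync_fps` is just for illustration.

```python
import math
from fractions import Fraction


def vsync_fps(raw_fps, refresh_hz=60):
    """Effective framerate when every frame must wait for a refresh boundary.

    Assumes double-buffered V-Sync and a constant render time of 1/raw_fps.
    """
    # Number of whole refresh intervals each frame occupies.
    intervals_needed = math.ceil(Fraction(refresh_hz, raw_fps))
    return refresh_hz / intervals_needed


for raw in (90, 60, 50, 45, 40, 25):
    print(f"GPU could render {raw} FPS -> displayed at {vsync_fps(raw)} FPS")
```

Under those assumptions, anything between 30 and 60 FPS gets rounded down to 30 FPS, because each frame ends up occupying two full refresh intervals. Real games have varying frame times, so in practice the rate jumps back and forth between 60 and 30, which is the stutter described above.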

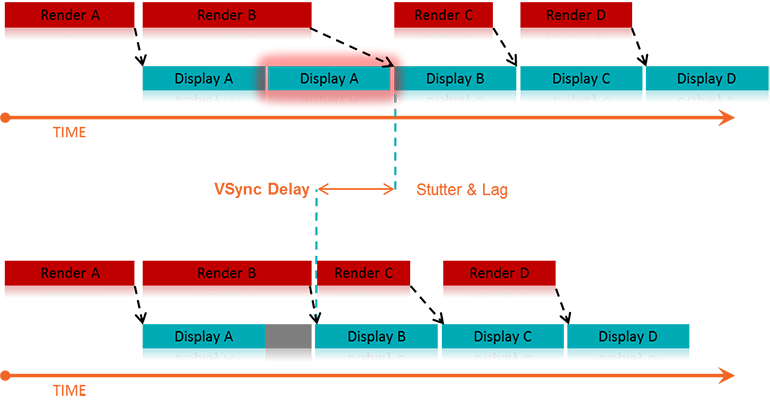

For some odd reason, while the idea to match the GPU render rate to the display’s refresh rate has been around since V-Sync was introduced years ago, no one thought to try the other way around until last year: matching the display’s refresh rate to the GPU’s render rate. Surely it seems like an obvious solution, but I suppose the idea that displays had to refresh at 60, 120, or 144 Hz was taken as a given.

The idea seems much simpler and more straightforward. Matching a GPU’s render rate to a display’s refresh rate requires the computational horsepower on the GPU to keep pace with a display that does not care about how complex a frame is to render, and just chugs on at 60+ Hz. Matching a display’s refresh rate to a GPU’s render rate only requires the display to respond to a signal sent from the GPU to refresh when a new frame is sent.

This means that any framerate above 30 FPS should be extremely playable, resolving any tearing issues found when playing without V-Sync, and resolving any stuttering or lag issues caused by having lower than 60 FPS when playing with V-Sync. Suddenly the range of 30-60 FPS becomes much more playable, and in general gameplay and on-screen rendering become much smoother.
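As a rough sketch of the idea, the display’s refresh interval simply follows the GPU’s frame time, as long as that stays within what the panel can do. The 30 Hz and 144 Hz limits below are assumed values for a hypothetical panel, and clamping is a simplification of what real hardware does outside its supported range.

```python
def adaptive_interval_ms(render_ms, min_hz=30, max_hz=144):
    """Refresh interval (ms) for a variable-refresh display.

    min_hz and max_hz are assumed limits of a hypothetical panel. Inside that
    range the display refreshes exactly when a new frame arrives.
    """
    shortest = 1000 / max_hz  # panel cannot refresh faster than this
    longest = 1000 / min_hz   # panel cannot hold an image longer than this
    return min(max(render_ms, shortest), longest)


for render_ms in (1.0, 10.0, 22.0, 25.0, 100.0):
    print(f"frame took {render_ms} ms -> shown for "
          f"{adaptive_interval_ms(render_ms):.2f} ms")
```

A frame that takes 22 ms to render is shown after 22 ms, instead of waiting for the next fixed 60 Hz boundary at 33.3 ms.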

The modern solution

This solution comes in two forms: G-Sync – a proprietary design created by Nvidia; and FreeSync – an open standard designed by AMD.

G-Sync

G-Sync was introduced towards the end of 2013, as an add-on module for monitors (first available in early 2014). The G-Sync module is a board made using a proprietary design, which replaces the scaler in the display. However, the G-Sync module doesn’t actually implement a hardware scaler, leaving that to the GPU instead. Without getting into too much detail about how the hardware physically works, suffice it to say the G-Sync board is placed along the chain in a spot where it can determine when/how often the monitor draws the next frame. We’ll get a bit more in depth about it later on in this article.

The problem with this solution is that this either requires the display manufacturer to build their monitors with the G-Sync module embedded, or requires the end user to purchase a DIY kit and install it themselves in a compatible display. In both cases, extra cost is added due to the necessary purchase of proprietary hardware. While it is effective, and certainly helps Nvidia’s bottom line, it dramatically increases the cost of the monitor. Another note of importance: G-Sync will only function on PCs equipped with Nvidia GPUs newer/higher end than the GTX 650 Ti. This means that anyone running AMD or Intel (integrated) graphics cards is out of luck.

FreeSync

FreeSync, on the other hand, was introduced by AMD at the beginning of 2014. While monitors that support Adaptive-Sync (explained below) have been announced, they have yet to hit the market as of the time of this writing (January 2015). FreeSync is designed as an open standard, and in April of 2014, VESA (the Video Electronics Standards Association) adopted Adaptive-Sync as part of its specification for DisplayPort 1.2a.

Adaptive-Sync is a required component of AMD’s FreeSync, which allows the monitor to vary its refresh rate based on input from the GPU. DisplayPort is a universal and open standard, supported by every modern graphics card and most modern monitors. However, while Adaptive-Sync is part of the official VESA specification for DisplayPort 1.2a and 1.3, it is optional. This matters because it means that not all new displays utilizing DisplayPort 1.3 will necessarily support Adaptive-Sync. We very much hope they do, as a universal standard would be great, but including Adaptive-Sync introduces some extra cost in building the display, mostly in the way of validating and testing a display’s response.

To clarify the differences, Adaptive-Sync refers to the DisplayPort feature that allows for variable monitor refresh rates, and FreeSync refers to AMD’s implementation of the technology which leverages Adaptive-Sync in order to display frames as they are rendered by the GPU.

AMD touts the following as the primary reasons why FreeSync is better than G-Sync: “no licensing fees for adoption, no expensive or proprietary hardware modules, and no communication overhead.”

The first two are obvious, as they refer to the primary downsides of G-Sync: a proprietary design requiring licensing fees, and proprietary hardware modules that require purchase and installation. The third reason is a little more complex.

Differences in communication protocols

To understand that third reason, we need to discuss how Nvidia’s G-Sync model interacts with the display. We’ll provide a relatively high level overview of how that system works and compare it to FreeSync’s implementation.

The G-Sync module modifies the VBLANK interval. The VBLANK (vertical blanking interval) is the time between the display drawing the end of the final line of a frame and the start of the first line of a new frame. During this period the display continues showing the previous frame until the interval ends and it begins drawing the new one. Normally, the scaler or some other component in that spot along the chain determines the VBLANK interval, but the G-Sync module takes over that spot. While LCD panels don’t actually need a VBLANK (CRTs did), they still have one in order to be compatible with current operating systems which are designed to expect a refresh interval.

The G-Sync module modifies the timing of the VBLANK in order to hold the current image until the new one has been rendered by the GPU. The downside of this is that the system needs to poll repeatedly in order to check if the display is in the middle of a scan. In the case that it is, the system will wait until the display has finished its scan and will then have it render a new frame. This means that there is a measurable (if small) negative impact on performance. Those of you looking for more technical information on how G-Sync works can find it here.

FreeSync, as AMD pointed out, does not utilize any polling system. The DisplayPort Adaptive-Sync protocols allow the system to send the signal to refresh at any time, meaning there is no need to check if the display is mid-scan. The nice thing about this is that there’s no performance overhead and the process is simpler and more streamlined.

One thing to keep in mind about FreeSync is that, like Nvidia’s system, it is limited to newer GPUs. In AMD’s case, only GPUs from the Radeon HD 7000 line and newer will have FreeSync support.

(Author’s Edit: To clarify, Radeon HD 7000 GPUs and up as well as Kaveri, Kabini, Temash, Beema, and Mullins APUs will have FreeSync support for video playback and power-saving scenarios [such as lower framerates for static images].

However, only the Radeon R7 260, R7 260X, R9 285, R9 290, R9 290X, and R9 295X2 will support FreeSync’s dynamic refresh rates during gaming.)

Based on these things, you might immediately conclude that FreeSync is the better choice, and I would agree with you, though there are some pros and cons of both to consider (I’ll leave out the obvious benefits that both systems already share, such as smoother gameplay).

Pros and Cons

Nvidia G-Sync

Pros:

- Already available on a variety of monitors

- Great implementation on modern Nvidia GPUs

Cons:

- Requires proprietary hardware and licensing fees, and is therefore more expensive (the DIY kit costs $200, though it’s probably a lower cost to display OEMs)

- Proprietary design forces users to only use systems equipped with Nvidia GPUs

- Two-way handshake for polling has a negative impact on performance

AMD FreeSync

Pros:

- Uses Adaptive-Sync which is already part of the VESA standard for DisplayPort 1.2a and 1.3

- Open standard means no extra licensing fees, so the only extra costs for implementation are compatibility/performance testing (AMD stated that FreeSync enabled monitors would cost $100 less than their G-Sync counterparts)

- Because it can send a refresh signal at any time, there’s no need for a polling system and therefore no communication overhead

Cons:

- While G-Sync monitors have been available for purchase since early 2014, there won’t be any FreeSync enabled monitors until March 2015

- Despite it being an open standard, neither Intel nor Nvidia has even announced support to make use of the system (or even Adaptive-Sync for that matter), meaning for now it’s an AMD-only affair

Final thoughts

My suspicion is that FreeSync will eventually win out, despite the fact that there’s already some market share for G-Sync. The reason for this is that Nvidia does not account for 100% of the GPU market, and G-Sync is limited only to Nvidia systems. Despite its reluctance, Nvidia will likely eventually support Adaptive-Sync and release drivers that make use of it. This is especially true because displays with Adaptive-Sync will be significantly more affordable than G-Sync enabled monitors, and will perform the same or better.

Eventually Nvidia will lose this battle, and when they finally give in, that will be when consumers benefit the most. Until then, if you have an Nvidia GPU and want smooth gameplay now, there are plenty of G-Sync enabled monitors on the market. If you have an AMD GPU, you’ll see your options start to open up within the next couple of months. In any case, both systems provide a tangible improvement for gaming and really any task that utilizes real-time rendering, so either way in the battle between G-Sync vs FreeSync, you win.

Just want to point out an error in your article about FreeSync.

All AMD Radeon™ graphics cards in the AMD Radeon™ HD 7000, HD 8000, R7 or R9 Series will support Project FreeSync for video playback and power-saving purposes. The AMD Radeon™ R9 295X2, 290X, R9 290, R9 285, R7 260X and R7 260 GPUs additionally feature updated display controllers that will support dynamic refresh rates during gaming.

AMD APUs codenamed “Kaveri,” “Kabini,” “Temash,” “Beema” and “Mullins” also feature the necessary hardware capabilities to enable dynamic refresh rates for video playback, gaming and power-saving purposes. All products must be connected to a display that supports DisplayPort Adaptive-Sync.

It is our current understanding that the software architecture of select games may not be compatible with dynamic refresh rate technology like Project FreeSync. In these instances, users will be able to toggle the activation of FreeSync in the AMD Catalyst™ driver.

Straight From AMD

You’re right, I’ll update the article to reflect that. When I was writing the list of supported GPUs, I forgot that AMD differentiated between the GPUs that will only support the video playback and power saving features and the GPUs that will support the full range of FreeSync (including the dynamic refresh rate).

What does Nvidia have to say about why G-Sync is better? They have kept the price, so there has to be something behind it. And yes, it’s more expensive for now, but can’t they just drop the licensing price close to zero if needed? It’s not like the actual hardware is super expensive sci-fi stuff.

From what I have experienced with G-Sync, it limits the work of the GPU to the max FPS of the display, meaning the GPU does less work and makes less heat and noise if the screen cannot draw faster anyway. If I understood correctly, with FreeSync the GPU still spams the monitor as much as it can, and the framerate is likely to be more variable even if the actual drawing would be tearing-free. With FreeSync the GPU cannot know what the screen is doing. Does it matter? Who knows? Side-by-side real world comparisons remain to be seen.

Excellent article!

I understand Nvidia has more market share when it comes to their GPUs and their GPUs use less power. This may be a deciding factor for some when choosing between a display option.

Too bad Nvidia won’t adopt FreeSync anytime soon. I may just have to purchase an AMD GPU.